In March 2026, we measured how often AI systems cite our website. The answer: zero citations. Six weeks later, Bing Copilot alone was citing us over 250 times per month, with peaks of over 40 citations per day. This growth wasn't accidental; it was the result of a targeted GEO strategy.

GEO stands for Generative Engine Optimization. While SEO optimizes websites for Google, GEO makes websites visible to AI systems like ChatGPT, Perplexity, Gemini, and Claude. The differences are fundamental, and the results speak for themselves.

Why GEO Matters

Google ranks websites. ChatGPT, Perplexity, Gemini, and Claude recommend answers. These are two completely different games.

On Google, backlinks, keywords, and technical factors decide rankings. With AI systems, fact density, citability, and structured information decide what gets cited. A website can rank number one on Google and still not be mentioned by a single AI system.

The numbers show why this matters: according to current research, AI referrals convert at 14.2 percent, compared to 2.8 percent for organic traffic. That is roughly a factor of five (14.2 / 2.8 ≈ 5.1). But the advantage only applies to brands that are actively recommended, not just mentioned.

The Starting Point

At the beginning of March 2026, we asked all major AI systems: "Who is the best AI agency for SMBs in Germany?" StudioMeyer appeared nowhere. Instead, they recommended companies, some of which had no website at all but did have a Reddit thread with 200 upvotes.

That was the wake-up call. We had a technically excellent website with a 100/100 Lighthouse SEO score, over 145 blog articles, and 680+ AI tools, yet to AI systems we were invisible.

The Strategy: Four Pillars

Pillar 1: AI Discovery Stack

AI systems crawl websites differently than search engines. They look for specific files that provide machine-readable information:

- llms.txt — A text file that explains to the AI who we are and what we offer. Like robots.txt, but for LLMs.

- agents.json — Describes our AI agents and their capabilities in the WebMCP standard.

- agent-card.json — A2A Protocol (Agent-to-Agent) by Google for automatic agent discovery.

- robots.txt — 14 AI bots explicitly allowed: GPTBot, ClaudeBot, PerplexityBot, Google-Extended, Applebot-Extended, Meta-ExternalAgent, and more.

- JSON-LD Schema — 12 schema blocks: Organization, ProfessionalService, WebSite, WebPage with SpeakableSpecification, FAQPage, Person, BreadcrumbList, and more.

- Sitemap — 640+ URLs with hreflang tags for all three languages (DE/EN/ES).

- .well-known/mcp.json — MCP server discovery for AI clients.
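A minimal sketch of generating the llms.txt file from the list above. It assumes the structure described in the llms.txt proposal at llmstxt.org (an H1 with the name, a blockquote summary, then H2 sections of Markdown links); the `LlmsTxt` and `Link` classes, the company name, and the URLs are hypothetical placeholders, not StudioMeyer's actual file.

```python
from dataclasses import dataclass, field


@dataclass
class Link:
    title: str
    url: str
    note: str = ""  # optional one-line description after the link


@dataclass
class LlmsTxt:
    name: str
    summary: str
    details: str = ""
    sections: dict[str, list[Link]] = field(default_factory=dict)

    def render(self) -> str:
        # H1 with the site name, then a blockquote summary.
        lines = [f"# {self.name}", "", f"> {self.summary}", ""]
        if self.details:
            lines += [self.details, ""]
        # Each H2 section is a Markdown list of links with optional notes.
        for heading, links in self.sections.items():
            lines += [f"## {heading}", ""]
            for link in links:
                suffix = f": {link.note}" if link.note else ""
                lines.append(f"- [{link.title}]({link.url}){suffix}")
            lines.append("")
        # Exactly one trailing newline at the end of the file.
        return "\n".join(lines).rstrip() + "\n"


if __name__ == "__main__":
    doc = LlmsTxt(
        name="Example Agency",
        summary="AI agency building agents and MCP servers for SMBs.",
        sections={"Services": [Link("AI Agents", "https://example.com/agents", "Custom agent development")]},
    )
    print(doc.render())
```

The rendered text is written to `/llms.txt` at the site root; keeping the summary factual and short matters more than the exact section names.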

Our GEO audit score for the Discovery Stack: 100/100. Few websites achieve this.
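For the sitemap item in the Discovery Stack list, hreflang alternates are usually expressed as `xhtml:link` elements inside each `<url>` entry, where every language version lists all versions, including itself. The sketch below builds such a sitemap with Python's standard `xml.etree.ElementTree`; the `build_sitemap` helper and the example.com URLs are illustrative assumptions.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML_NS = "http://www.w3.org/1999/xhtml"
# Serialize the sitemap namespace as the default and XHTML as "xhtml:".
ET.register_namespace("", SITEMAP_NS)
ET.register_namespace("xhtml", XHTML_NS)


def build_sitemap(pages: list[dict[str, str]]) -> bytes:
    """pages: one dict per logical page, mapping language code -> absolute URL."""
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for variants in pages:
        # Every language version gets its own <url> entry...
        for url in variants.values():
            url_el = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
            ET.SubElement(url_el, f"{{{SITEMAP_NS}}}loc").text = url
            # ...that lists all alternates, including itself.
            for lang, alt_url in variants.items():
                ET.SubElement(url_el, f"{{{XHTML_NS}}}link",
                              rel="alternate", hreflang=lang, href=alt_url)
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


if __name__ == "__main__":
    print(build_sitemap([{
        "de": "https://example.com/de/",
        "en": "https://example.com/en/",
        "es": "https://example.com/es/",
    }]).decode("utf-8"))
```

With three languages, each logical page produces three `<url>` entries with three alternates apiece, which is why multilingual sitemaps grow quickly.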

Pillar 2: Entity SEO

AI systems think in entities, not keywords. "StudioMeyer" must be recognizable as a unified entity — across all sources.

What we did:

- Entity unification — In over 45 files, we corrected different spellings ("StudioMeyer.IO", "StudioMeyer.io", "Studio Meyer") to a consistent "StudioMeyer". JSON-LD, OG tags, meta tags, titles — all consistent. Result: 95% entity consistency.

- Social Profile Entity Linking — 6 platforms (LinkedIn, GitHub, Instagram, X, Reddit, dev.to) listed in the Schema.org sameAs property, with a link from each platform back to the website so the connection is bidirectional.

- Directory listings — Clutch.co and other industry directories to strengthen entity signals.
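The entity unification step can be partly automated. This sketch counts and replaces the variant spellings named above while skipping domains inside URLs and e-mail addresses; the file suffixes and the `audit_tree` helper are assumptions for illustration, and replacements in visible copy still deserve a manual review.

```python
import re
from collections import Counter
from pathlib import Path

CANONICAL = "StudioMeyer"
# Variant spellings listed in the article; matching is case-sensitive.
VARIANTS = ["StudioMeyer.IO", "StudioMeyer.io", "Studio Meyer"]

# Ignore matches preceded by "/", "@", "." or a word character so that
# domains inside URLs and e-mail addresses are left untouched.
PATTERN = re.compile(
    r"(?<![/@.\w])(" + "|".join(re.escape(v) for v in VARIANTS) + r")\b"
)


def count_variants(text: str) -> Counter:
    """Count non-canonical brand spellings in a piece of text."""
    return Counter(m.group(1) for m in PATTERN.finditer(text))


def normalize(text: str) -> str:
    """Replace every matched variant with the canonical entity name."""
    return PATTERN.sub(CANONICAL, text)


def audit_tree(root: Path, suffixes: tuple[str, ...] = (".tsx", ".json", ".md")) -> dict[Path, Counter]:
    """Report files under root (hypothetical suffixes) that still contain variants."""
    report = {}
    for path in root.rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            hits = count_variants(path.read_text(encoding="utf-8", errors="ignore"))
            if hits:
                report[path] = hits
    return report
```

Running `audit_tree` before and after `normalize` gives a simple way to track the consistency figure over time.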

Why this matters: according to studies, fragmented entity signals lead to a 2.8x lower AI citation rate. When an AI system sees "StudioMeyer.io" and "Studio Meyer" as two different entities, authority is split between them.
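One way to keep entity signals unified is to emit a single Organization block whose `name` uses the canonical spelling and whose `sameAs` lists every profile. A minimal sketch, assuming a stable `@id` of the form `<site>/#organization` (a common convention, not a schema.org requirement); the function name and profile URLs are hypothetical.

```python
import json


def organization_jsonld(name: str, url: str, logo: str, same_as: list[str]) -> str:
    """Render an Organization JSON-LD block with sameAs profile links."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        # A stable @id lets other blocks (WebSite, Person, ...) reference this entity.
        "@id": url.rstrip("/") + "/#organization",
        "name": name,  # use exactly the canonical spelling everywhere
        "url": url,
        "logo": logo,
        "sameAs": same_as,  # one URL per social or directory profile
    }
    body = json.dumps(data, ensure_ascii=False, indent=2)
    # Escape "</" so the JSON can never close the <script> element early.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    print(organization_jsonld(
        "StudioMeyer",
        "https://example.com/",
        "https://example.com/logo.png",
        ["https://www.linkedin.com/company/example", "https://github.com/example"],
    ))
```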

Pillar 3: Citation-Optimized Content

The most surprising insight: our blog content was cited immediately, while our service pages started at a citation rate of zero. AI systems cite technical deep-dives, not marketing copy.

What works:

- Comparison articles with tables (our "MCP vs REST API vs WebMCP" was cited immediately)

- Facts and concrete numbers in every paragraph — statistics increase citation rate by 30-40 percent (KDD 2024)

- Every paragraph must work standalone — AI systems extract individual passages as quotes

- FAQ schema on every service page with 5-8 real questions

- Authority links to Schema.org, W3C, Anthropic, and other recognized sources
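For the FAQ schema item, a schema.org `FAQPage` nests each `Question` (its `name` is the question text) with an `acceptedAnswer` of type `Answer`. A minimal sketch that builds this object and enforces the 5-8 question range recommended above; `faq_jsonld` is a hypothetical helper, and its output still needs to be embedded in a `<script type="application/ld+json">` tag.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]], min_items: int = 5, max_items: int = 8) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    if not min_items <= len(pairs) <= max_items:
        raise ValueError(f"expected {min_items}-{max_items} questions, got {len(pairs)}")
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


if __name__ == "__main__":
    # Placeholder questions; real pages should use questions customers actually ask.
    pairs = [(f"Question {i}?", f"Answer {i}.") for i in range(1, 6)]
    print(json.dumps(faq_jsonld(pairs), indent=2))
```

Each answer should also make sense on its own, since AI systems may quote a single answer without the surrounding page.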

What doesn't work:

- Vague marketing copy ("we offer innovative solutions")

- Dependent paragraphs ("as mentioned above")

- Pages without measurable facts
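The "doesn't work" list can be turned into a rough automated check. This sketch splits text into paragraphs on blank lines and flags back-references, vague phrases, and paragraphs without any digit; the phrase lists and the digit heuristic are illustrative assumptions, not a validated citability model.

```python
import re
from dataclasses import dataclass

# Hypothetical phrase lists; extend them with the patterns your own copy uses.
BACK_REFERENCES = ("as mentioned above", "as noted above", "see above", "as described earlier")
VAGUE_PHRASES = ("innovative solutions", "cutting-edge", "best-in-class", "tailored solutions")
DIGIT = re.compile(r"\d")


@dataclass
class Finding:
    index: int  # zero-based paragraph position
    issue: str


def lint_paragraphs(text: str) -> list[Finding]:
    """Flag paragraphs that are unlikely to work as standalone quotes."""
    findings = []
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    for i, para in enumerate(paragraphs):
        lower = para.lower()
        for phrase in BACK_REFERENCES:
            if phrase in lower:
                findings.append(Finding(i, f"dependent: '{phrase}'"))
        for phrase in VAGUE_PHRASES:
            if phrase in lower:
                findings.append(Finding(i, f"vague: '{phrase}'"))
        # Crude proxy for "no measurable facts": the paragraph contains no digit.
        if not DIGIT.search(para):
            findings.append(Finding(i, "no measurable facts"))
    return findings
```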

Pillar 4: Homepage as Entity Hub

We turned the homepage into an entity hub: founder name, founding year, tech stack, and location appear in visible text, not just in meta tags. A visible stats bar shows the key numbers (680+ tools, 35+ agents, 100/100 SEO, 640+ citations). Every paragraph works as a standalone quote that an LLM can use without further context.

The Results

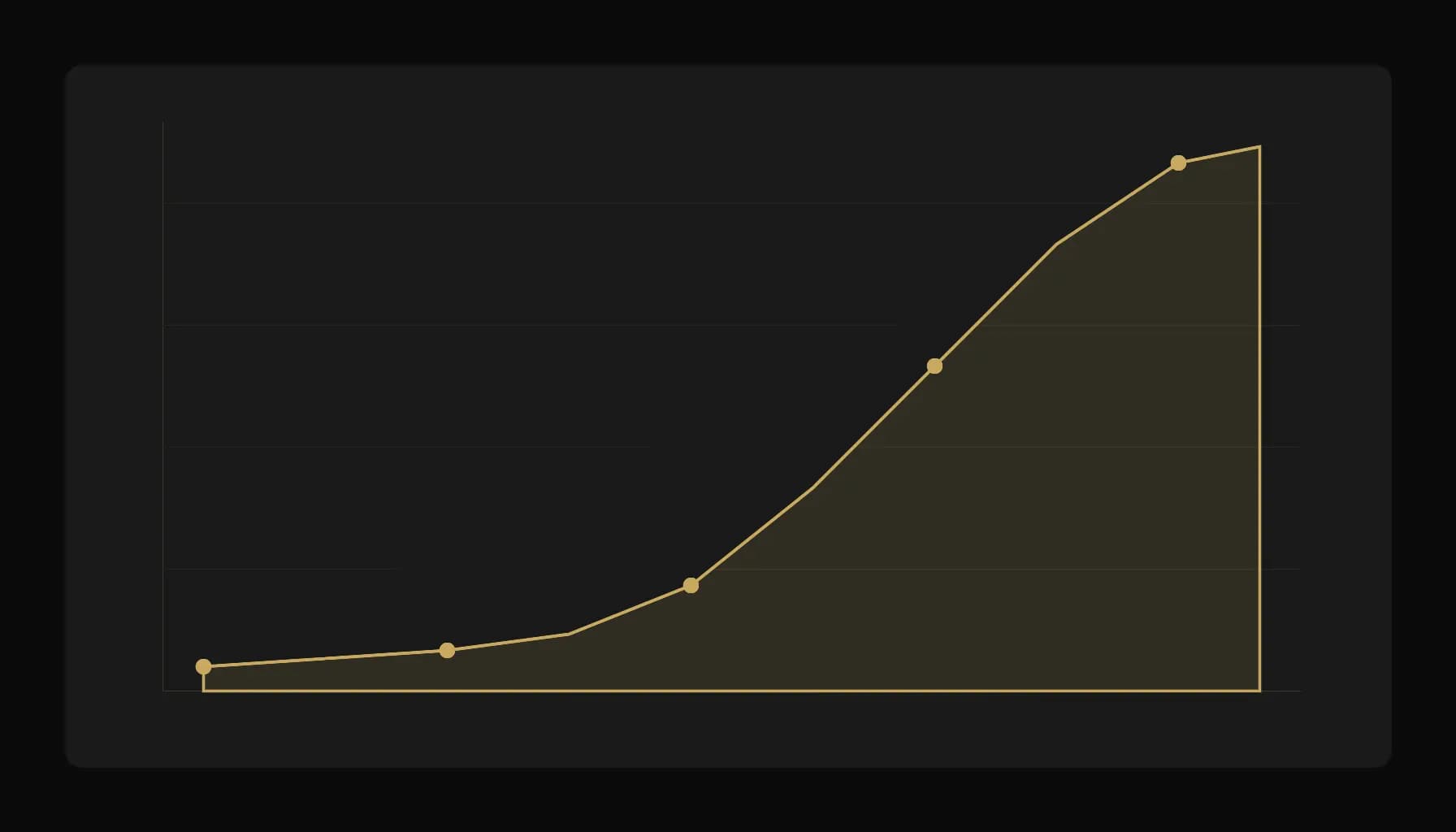

AI Citations: Over 1,000 per Month — Steady Growth Since Mid-March 2026

- Over 1,000 citations per month on Bing Copilot alone (as of 25 April 2026) — live screenshot: /proof/bing-ai-citations-current.png

- An average of 7 cited pages (down slightly from 9; at higher citation volume Bing converges on the top pages, which is normal), with up to 27 distinct pages cited on peak days

- Trend: Steady growth from mid-March to late April — milestones: 70 by end of March, 233 on 7 April, 304 on 13 April, 500 around 15–16 April, 640 on 19 April, 730 on 19–20 April, 930 on 22 April, 1,200 on 25 April

- Caveat: Bing Webmaster Tools data is sampled and can be retroactively adjusted by Microsoft. Other LLMs (ChatGPT, Claude, Perplexity, Gemini, Grok) don't expose comparable per-domain citation data — the 1,200 figure is the Microsoft Copilot slice only.

- Grok organic mention (13 April 2026): Matthias asked Grok for the best AI agencies on Mallorca without mentioning any brand, and StudioMeyer was named organically. This is external LLM validation without a brand-specific prompt.

Discovery Stack

- GEO Score: 73/100 (Simulate), Discovery Stack 100/100, Freshness 100/100

- All AI discovery files live: llms.txt, agents.json, agent-card.json, mcp.json, webmcp

- 14 AI bots explicitly allowed in robots.txt, 0 blocked

Content Metrics

- 147+ blog articles in three languages (DE/EN/ES)

- 2,055+ automated tests for code quality

- TTFB under 200ms across all pages (SSR with Cloudflare)

What You Can Do Right Now

In One Hour

- Check robots.txt — Allow GPTBot, ClaudeBot, PerplexityBot (many websites accidentally block them)

- Create llms.txt — A simple text file in your root: who you are, what you offer, what makes you unique

- Check JSON-LD schema — Organization, LocalBusiness, and Person schema should be complete
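The robots.txt check can be scripted with Python's standard `urllib.robotparser`, which evaluates the rules for a given user-agent token. The sketch covers only the six bots named earlier (the article's full list has 14), and `blocked_ai_bots` and `allow_rules` are hypothetical helpers; individual crawlers may interpret edge cases differently from the standard-library parser.

```python
from urllib.robotparser import RobotFileParser

AI_BOTS = ["GPTBot", "ClaudeBot", "PerplexityBot",
           "Google-Extended", "Applebot-Extended", "Meta-ExternalAgent"]


def blocked_ai_bots(robots_txt: str, url: str = "https://example.com/") -> list[str]:
    """Return the AI user agents that may NOT fetch `url` under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_BOTS if not parser.can_fetch(bot, url)]


def allow_rules(bots: list[str]) -> str:
    """Generate one explicit 'Allow: /' group per bot."""
    return "\n".join(f"User-agent: {bot}\nAllow: /\n" for bot in bots)


if __name__ == "__main__":
    # An accidental block: GPTBot is disallowed, everything else is allowed.
    sample = "User-agent: GPTBot\nDisallow: /\n\nUser-agent: *\nAllow: /\n"
    print(blocked_ai_bots(sample))
    print(allow_rules(AI_BOTS))
```

To check a live site, fetch its robots.txt first and pass the text to `blocked_ai_bots`; explicit groups make the intent visible even when a wildcard rule would already allow access.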

In One Day

- Check entity consistency — Is your company name spelled the same everywhere? JSON-LD, OG tags, meta tags, titles?

- Optimize blog articles — Make every paragraph standalone. Add facts and numbers. Add FAQ schema.

- Social Profile Linking — Add LinkedIn, GitHub, Instagram to Schema.org sameAs, set up bidirectional links.

Ongoing

- Write technical deep-dives — Comparison articles, how-tos with concrete numbers, FAQ-formatted guides

- Directory listings — Clutch, GoodFirms, Sortlist, DesignRush, industry-specific directories

- Refresh content every 30 days — AI systems prefer current content. Set Last-Modified headers and og:updated_time.
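Both freshness signals can be derived from a single UTC timestamp: `Last-Modified` uses the HTTP-date format, while `og:updated_time` is commonly written in ISO 8601. A minimal sketch, assuming timezone-aware datetimes; the helper names are hypothetical and the 30-day threshold mirrors the recommendation above.

```python
from datetime import datetime, timedelta, timezone
from email.utils import format_datetime


def freshness_metadata(updated: datetime) -> dict[str, str]:
    """Derive the Last-Modified header and og:updated_time from one aware datetime."""
    updated = updated.astimezone(timezone.utc)
    return {
        # HTTP-date, e.g. "Sat, 25 Apr 2026 10:00:00 GMT"
        "Last-Modified": format_datetime(updated, usegmt=True),
        # ISO 8601 with an explicit UTC offset
        "og:updated_time": updated.isoformat(timespec="seconds"),
    }


def is_stale(updated: datetime, now: datetime, max_age_days: int = 30) -> bool:
    """True if the page has not been refreshed within max_age_days."""
    return now - updated > timedelta(days=max_age_days)


if __name__ == "__main__":
    print(freshness_metadata(datetime(2026, 4, 25, 10, 0, tzinfo=timezone.utc)))
```

Only bump the timestamp when the content actually changes; otherwise the signal stops meaning anything.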

The Future: GEO Will Become Standard

GEO today is where SEO was 15 years ago: a niche topic that will soon become indispensable. AI systems will get better at detecting quality signals, and competition for AI citations will increase.

The first-mover advantage is real. We went from zero to 640+ citations in eight weeks — with measures any business can implement. The question isn't whether your competition will start, but when.